The first parameter is the name of the container to attach and the second one the final digit of the MAC/IP addresses. We can use it on docker1: docker1:~$ docker run -d -net=none -name=demo debian sleep 3600

If you use the terraform stack from the github repository, there is a helper shell script to attach the container to the overlay.

DOCKER NETWORK TYPE MAC

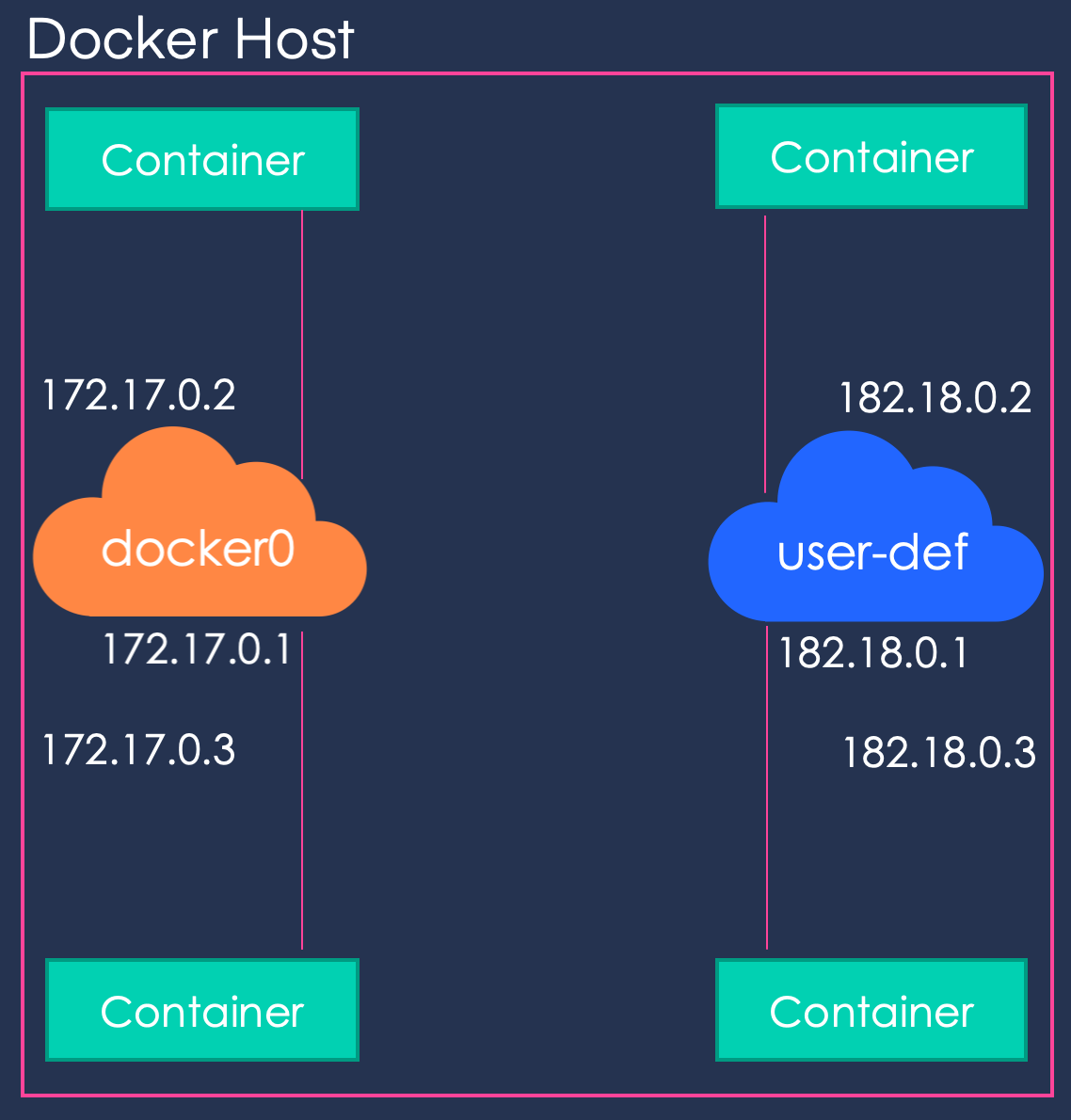

We have to do the same on docker1 with different MAC and IP addresses (02:42:c0:a8:00:03 and 192.168.0.3). We used the same addressing schem as Docker: the last 4 bytes of the MAC address match the IP address of the container and the second one is the VXLAN id. The symbolic link in /var/run/netns is required so we can use the native ip netns commands (to move the interface to the container network namespace). docker0:~$ ctn_ns_path=$(docker inspect -format="ĭocker0:~$ sudo ln -sf $ctn_ns_path /var/run/netns/$ctn_nsĭocker0:~$ sudo ip link set dev veth2 netns $ctn_nsĭocker0:~$ sudo ip netns exec $ctn_ns ip link set dev veth2 name eth0 address 02:42:c0:a8:00:02ĭocker0:~$ sudo ip netns exec $ctn_ns ip addr add dev eth0 192.168.0.2/24ĭocker0:~$ sudo ip netns exec $ctn_ns ip link set dev eth0 upĭocker0:~$ sudo rm /var/run/netns/$ctn_ns We can find it by inspecting the container. We will need the path of the network namespace for this container. First, we create a container: docker0:~$ docker run -d -net=none -name=demo debian sleep 3600 Now we will create containers and connect them to our bridge. Once we have run these commands on both docker0 and docker1, here is what we have: If we had created the interface inside the namespace (like we did for br0) we would not have been able to send traffic outside the namespace. This is necessary so the VXLAN interface can keep a link with our main host interface and send traffic over the network. Notice that we did not create the VXLAN interface inside the namespace but on the host and then moved it to the namespace.

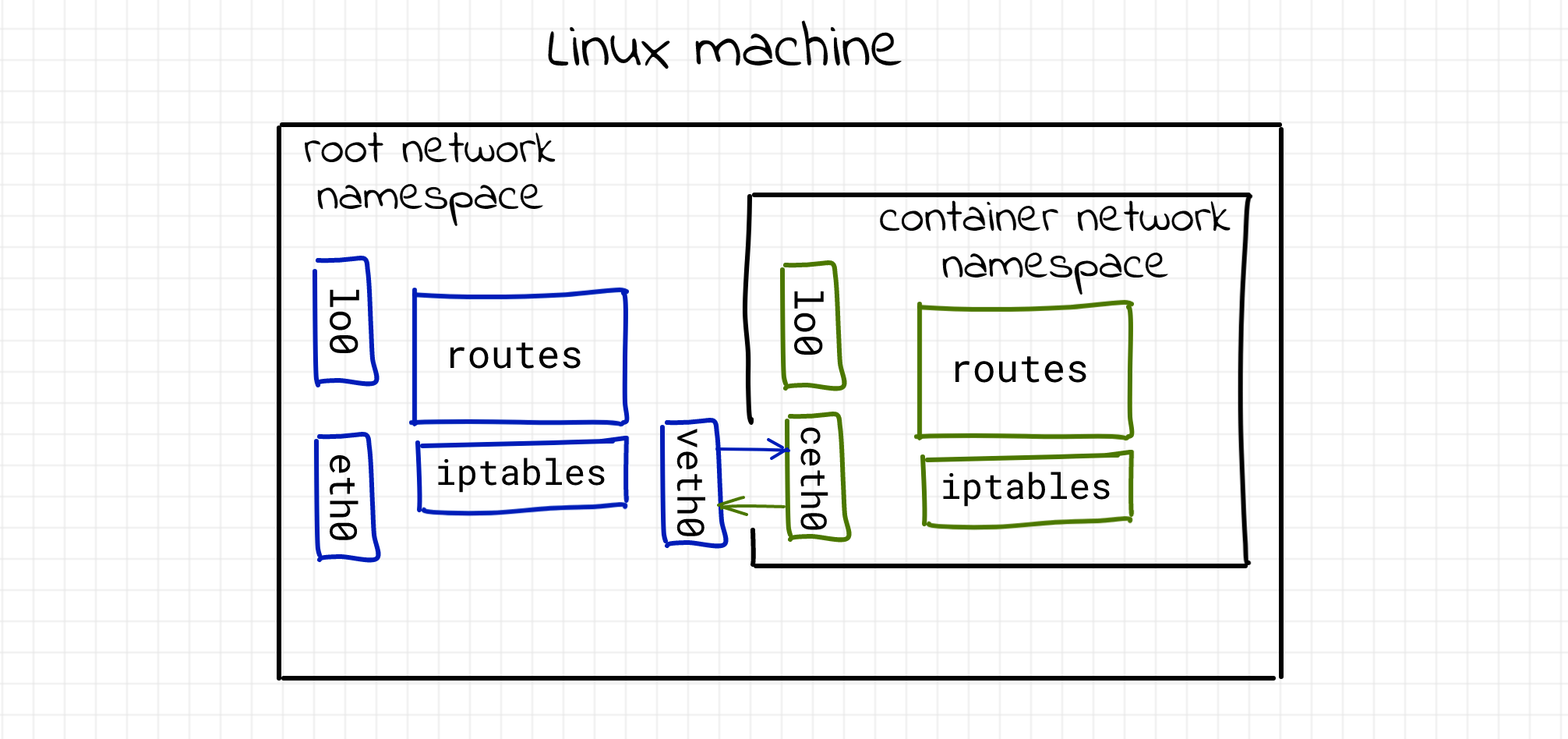

We will discuss the learning option later in this post. The proxy option allows the vxlan interface to answer ARP queries (we have seen it in part 2). We configured it to use VXLAN id 42 and to tunnel traffic on the standard VXLAN port. The most important command so far is the creation of the VXLAN interface. The next step is to create a VXLAN interface and attach it to the bridge: docker0:~$ sudo ip link add dev vxlan1 type vxlan id 42 proxy learning dstport 4789ĭocker0:~$ sudo ip link set vxlan1 netns overnsĭocker0:~$ sudo ip netns exec overns ip link set vxlan1 master br0ĭocker0:~$ sudo ip netns exec overns ip link set vxlan1 up Now we are going to create a bridge in this namespace, give it an IP address and bring the interface up: docker0:~$ sudo ip netns exec overns ip link add dev br0 type bridgeĭocker0:~$ sudo ip netns exec overns ip addr add dev br0 192.168.0.1/24ĭocker0:~$ sudo ip netns exec overns ip link set br0 up The first thing we are going to do now is to create an network namespace called “overns”: sudo ip netns add overns If you have tried the commands from the first two posts, you need to clean-up your Docker hosts by removing all our containers and the overlay network: docker0:~$ docker rm -f $(docker ps -aq) In this third post, we will see how we can create our own overlay with standard Linux commands. In part 2 we have looked in details at how Docker uses VXLAN to tunnel traffic between the hosts in the overlay. In part 1 of this blog post we have seen how Docker creates a dedicated namespace for the overlay and connect the containers to this namespace. Temps de lecture : 18 minutes Introduction
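The addressing scheme used in this post gives each container a MAC address made of 02:42 followed by the four bytes of its IPv4 address in hexadecimal. As a minimal sketch of that mapping, the function below derives the MAC address from an IP address. The name `mac_for_ip` is hypothetical and is not part of the post or of the helper script in the repository.

```shell
#!/bin/sh
# Hypothetical helper (not from the original post): derive a container MAC
# address from its IPv4 address, following the scheme described in this post:
# 02:42 followed by the four bytes of the IP address in hexadecimal.
# The body runs in a subshell so the IFS change does not leak to the caller.
mac_for_ip() (
    IFS=.
    # Split the dotted-quad address into four positional parameters.
    set -- $1
    printf '02:42:%02x:%02x:%02x:%02x\n' "$1" "$2" "$3" "$4"
)

mac_for_ip 192.168.0.2   # prints 02:42:c0:a8:00:02
mac_for_ip 192.168.0.3   # prints 02:42:c0:a8:00:03
```

The two calls reproduce the addresses used for the containers on docker0 and docker1, so the output can be passed directly to `ip link set ... address`.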